Search the Community

Showing results for tags 'render'.


This was discussed briefly on Discord some time ago; I wanted to bring it up here as well for consistency. I don't believe it's an emergency, but I do consider it an important change, especially later down the road, as Linux is slowly moving away from X11, with many distros already going full Wayland by default. In my case I'm pretty much waiting for KDE Plasma to fix a few remaining bugs in the DE before permanently switching from X11 to Wayland too, so I might be able to make use of it rather soon if they do.

With the new input and rendering system (GLFW) introduced after 2.09 and available for testing in the dev builds, we're on our way to having a Wayland-compatible build of our engine. That means the engine would be able to render natively to the Wayland pipeline, without having to go through the fake X11 server that the Wayland session simulates for compatibility with X-exclusive apps. This not only offers proper compatibility for Wayland users, but may also improve performance on various fronts, which was one of the goals of the new rendering framework.

From what I remember @cabalistic telling me, we can't have the same engine binary for both X11 and Wayland: it must be compiled against different system packages to produce one version or the other. For Linux users the installer may need to offer two engine binaries in this case, or an option to pick which version you'd like to install if that's better. Other than that, I understand it should be possible in theory, as SDL2 and GLFW both offer Wayland-compatible libraries to compile the engine against. I'm not familiar with the C++ code in the slightest, so I'll let the experienced developers complete this with the proper technical additions.
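If the installer did end up offering two binaries, one way it could choose a default is by looking at the session's environment variables. The following is only a rough sketch of that idea in Python, not anything from the actual installer: the function names and the binary names `engine-wayland` and `engine-x11` are made up for illustration. It relies on `XDG_SESSION_TYPE`, `WAYLAND_DISPLAY` and `DISPLAY`, which are commonly set by Linux desktop sessions but are not guaranteed to be present everywhere.

```python
import os
from typing import Mapping


def detect_display_server(env: Mapping[str, str] = os.environ) -> str:
    """Guess the display server: returns 'wayland', 'x11' or 'unknown'."""
    # Most login managers export XDG_SESSION_TYPE for graphical sessions.
    session_type = env.get("XDG_SESSION_TYPE", "").lower()
    if session_type in ("wayland", "x11"):
        return session_type
    # Check WAYLAND_DISPLAY before DISPLAY: a Wayland session usually also
    # sets DISPLAY for its X11 compatibility server.
    if env.get("WAYLAND_DISPLAY"):
        return "wayland"
    if env.get("DISPLAY"):
        return "x11"
    return "unknown"


def pick_engine_binary(env: Mapping[str, str] = os.environ) -> str:
    """Pick a binary name; both names are hypothetical placeholders."""
    if detect_display_server(env) == "wayland":
        return "engine-wayland"
    # Fall back to X11, which also runs inside a Wayland session through
    # the compatibility layer.
    return "engine-x11"


if __name__ == "__main__":
    print(detect_display_server(), pick_engine_binary())
```

Falling back to the X11 build when detection fails seems like the safer default, since that build should still run under a Wayland session through the compatibility server.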


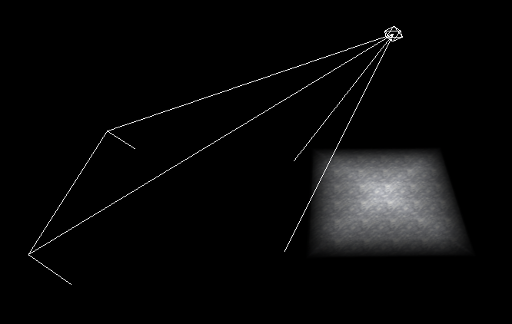

While doing some render system refactoring I noticed that projected lights were not rendering correctly in lighting preview mode. I thought it was something I had broken, until I went back to earlier revisions and discovered that it has actually been broken for some time (in fact, I tried checking out revisions from 3 years ago and the problem still reproduced). Projected lights are something of a "bug trap": I think most mappers don't use them or the DR lighting preview very much, so they don't get a lot of testing.

The behaviour I see is that while the rendered light projection obeys the shape of the frustum (i.e. the light_up and light_right vectors), it seems to ignore the direction of the light_target vector and always points the light straight downwards. The length of the target vector has an effect (on the size and brightness of the light outline on the floor), but the direction is ignored. Note that the problem only applies to the rendered light itself. The frustum outline appears to be correct (as it is handled by different code).

I believe the problem is with how the light texture transformation is calculated in Light::updateProjection(), although I can't be sure. I will make an effort to debug this, although projective texture transformations are near the limit of my mathematical abilities (I can understand the general concept and can probably figure out the process step by step by taking it slowly), so I might have to ask @greebo for assistance if the maths becomes too hard. Another approach is to try to revert the relevant code back to when (I think) it was working correctly, after I initially got projected lights working, although that might have been 10 years ago or more, so a straight git bisect isn't practical.
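To illustrate why the direction of light_target should matter, here is a minimal Python sketch of a generic projective mapping from a world-space point to light texture coordinates. It is not the code in Light::updateProjection(); the function name and conventions are assumptions made for illustration. It assumes the frustum cross-section at the target spans target ± right and target ± up, and it ignores light_start/light_end as well as any engine-specific flipping of the texture axes.

```python
from typing import Optional, Sequence, Tuple

Vec3 = Sequence[float]


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec3, b: Vec3) -> Tuple[float, float, float]:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def project_to_light_texture(point: Vec3, origin: Vec3, target: Vec3,
                             right: Vec3, up: Vec3
                             ) -> Optional[Tuple[float, float]]:
    """Return (s, t) for a point, or None if it lies behind the light.

    Hypothetical helper: the point is expressed as
    a*right + b*up + c*target relative to the light origin (solved with
    Cramer's rule), then a and b are divided by c for the projection.
    """
    p = (point[0] - origin[0], point[1] - origin[1], point[2] - origin[2])
    det = dot(right, cross(up, target))
    if det == 0:
        raise ValueError("right, up and target must not be coplanar")
    a = dot(p, cross(up, target)) / det
    b = dot(p, cross(target, right)) / det
    c = dot(p, cross(right, up)) / det
    if c <= 0:
        return None  # point is at or behind the light origin
    # Map [-1, 1] at the frustum edges to [0, 1] texture space.
    return (0.5 + 0.5 * a / c, 0.5 + 0.5 * b / c)


if __name__ == "__main__":
    origin, right, up = (0, 0, 0), (50, 0, 0), (0, 50, 0)
    below = (0, 0, -100)
    # Target straight down: the point below the light is at the centre.
    print(project_to_light_texture(below, origin, (0, 0, -100), right, up))
    # Tilted target: the same point now falls outside the [0, 1] range.
    print(project_to_light_texture(below, origin, (100, 0, -100), right, up))
```

With a correct transformation, tilting the target moves the point directly below the light out of the texture's [0, 1] range. A projection that always lights the floor straight below, whatever the target direction, would be consistent with the target's direction being dropped somewhere while its length is kept.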

21 replies

Tagged with: lights, darkradiant (and 1 more)

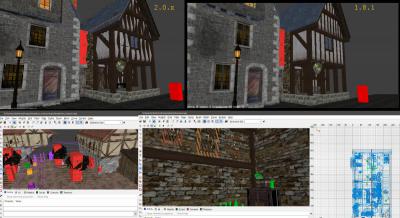

Hey guys. I recently felt the urge to get back into DR, but I have since built a new PC and migrated to Windows 10. With the wxWidgets versions of DarkRadiant I am experiencing a strange rendering glitch that results in edges being distorted in a seesaw-like pattern, among other things (see attachment). This bug does not occur on the GTK+ versions (tested with 1.8.1).

I dug through the forum and bug tracker without results, but I am hesitant to file a bug report, as I tend to overlook things and development has been on the back burner anyway. Also, the error may be caused by a myriad of other things, like wxWidgets' OpenGL render context, my GPU, Windows 10, etc. (If you think it's a good idea, I'll create a bug report.)

So I was wondering if you guys have experienced similar issues and thus have any idea what causes this behaviour and/or know a fix (apart from using DR 1.8.1, obviously ;~).

Relevant specs:

- OS: Windows 10 Pro
- GPU: Radeon R270 w/ 4GB VRAM

Thanks in advance!

PS: The render distance is also quite low, is that normal?

PPS: TDM itself looks just fine, in case that matters.


I've got an Nvidia GTX670 and I'm wondering what effect the Nvidia application settings in the Control Panel have on TDM render quality. In TDM everything is maxed (16x AA and TA), with the resolution set to my monitor's native 1440x900. I then did a few tests using the following settings for TheDarkMod.exe in the Nvidia Control Panel:

- Default: nothing changed, everything left on "global setting"
- Only one setting changed: forced 32x CSAA, which presumably overrides the 16x setting in game
- All application settings overridden and set to their max

I took a screenshot from a saved game on each setting and zoomed in to 200% so you can see better what's going on (zoom out to 50% in your browser to see the images at their original scale). Look to the far right of the image to see the biggest differences. Results are shown here:

It's subjective, but my feeling is that either the default setting or 32x CSAA gives the best results, while everything maxed looks the roughest. If you keep your eye on the lower edge of the black step, you can see jagged black and white edges on the maxed settings. Perhaps the forced AA and TA aren't actually working for some reason, even though we've told them to max. The 32x CSAA setting appears to smooth everything overall while losing some detail, which you may or may not prefer on the whole. I may need to play with default and 32x CSAA for a while to get a feel for them before deciding which I prefer.