vozka

Member

Posts

136

Joined

-

Last visited

-

Days Won

1

Everything posted by vozka

-

Yeah, I don't think it needs to be removed.

-

Fan Mission: Seeking Lady Leicester, by Grayman (3/21/2023)

vozka replied to Amadeus's topic in Fan Missions

Damn, what a crazy mission. One issue with missions like this is that sharing screenshots that would accurately represent it would ruin the experience. So if anybody is reading this and considering whether to play it or not: this is very much not a standard city/mansion mission. Obviously I was blown away. My favorite moment was wondering "Are these just decorations or will I be able to jump onto them? Surely it's just a decoration." And it wasn't. I bet I wasn't alone in this. The story was a bit confusing in places (the details like family issues, not what was overall happening), but what's important is that the mission was not. It was huge and there was a lot to do, but it flowed well. What helped was that finding stuff in "act one" helped me understand the layout of things in "act two", that in turn helped me find which areas I likely missed in "act one", and I was then free to take my time and return to them. That's a concept that worked really well because the added context made revisiting the old areas interesting again, something that I don't believe any other mission has managed to do for me. I did have to go through this thread to find some of the secrets, but that's because I generally don't replay missions and wanted to get the (mostly) full experience from my one playthrough. It did seem at one point that the mission could be completed when the player has seen maybe 50% of it, which was a bit strange, but I guess most people here would not do that anyway.

-

I've never actually seen the insides of an elevator but from what I know about electronics in similar settings from a few friends who did some work in the field, my guess is that it's an ARM system on a chip and it's quite possible that it runs Linux. And it's highly likely that it's separated from a chip that's taking care of the actual elevator movement, so you probably couldn't use the buttons for input. The reason for this is that ARM SoCs able to run Linux are really not that expensive nowadays, whereas developing custom systems outputting graphics with a cheaper, weaker chip has a large upfront cost. And if you're using a universal OS like Linux, you can keep the software side the same if the ARM chip you're using goes out of production and you need to switch to a new one. If these assumptions are true, running Doom on it would be easy provided there's some input method.

-

This is getting a bit ridiculous. Don't you think you should tone down the quality a bit? It's almost obscene.

-

2.10 Crashes - May be bow \ frontend acceleration related

vozka replied to wesp5's topic in TDM Tech Support

I'm pretty sure this happened to me at least once as well, but not every time the crash happened, if this brilliantly specific information helps any. It was not in the mission mentioned above (The Lieutenant 2), it was in various different missions.

-

As far as I know ChatGPT does not do this at all. It only saves content within one conversation, and while the developers definitely use user conversations to improve the model (and tighten the censorship of forbidden topics), it is not saved and learned as is.

-

I didn't want to spam this thread even more since I wasn't the one who was asked, but since you already replied: img2img does not work well for this use case as it only takes the colors of the original image as a starting point. Therefore it would either stay black & white and sketch-like or deviate significantly from the sketch in every way. Sometimes it's possible to find a balance, but it's time-consuming and it doesn't always work. This probably used Control Nets, specialized addon neural nets that are trained to guide the diffusion process using specialized images like normal maps, depth maps, results of edge detection and others. And there's also a control net trained on scribbles, which is what I assume Arcturus used. It still needs a text prompt, the control net functions as an added element to the standard image generation process, but it makes it possible to extract the shapes and concepts from the sketch without also using its colors.

-

Trying to bring this thread back to the original topic. Had ChatGPT 4 generate ideas for a game. I chose 7-day roguelike because it's supposed to be simple enough.

I would like to participate in the 7-day roguelike contest. It's a game jam where you make a roguelike game in 7 days - some preparation before that is allowed, you can have some basic framework etc, but the main portion of work is supposed to be done within the 7 days. Therefore it favors games with simple systems but good and original ideas. Some of the games contain "outside the box" design that stretches the definition of a roguelike.

Please give me an idea of a 7-day roguelike that I could create. Be specific: include the overall themes and topics, describe the game world, overarching abstract ideas (what is the goal of the player, how does the game world works, what makes it interesting...) and specifics about gameplay systems. Describe how it relates to traditional roguelike games or other existing games.

This is really not bad and after some simplification I could actually see it work, though I don't know if the mechanic is interesting enough. I had it generate two more. One was not roguelike enough (it was basically something like Dungeon Keeper), the other was a roguelike-puzzle with a time loop: you had to get through a procedurally generated temple with monsters, traps and puzzles in a limited amount of turns, and after you spend those turns, you get returned to the beginning, the whole temple resets and you start again, trying to be more efficient than last time.
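To make the time-loop idea a bit more concrete, here is a minimal sketch of how the turn budget and reset could work. Everything in it is an assumption made up for illustration (the room types, the turn costs, the rule that a room you have already met costs only one turn next time); it is not what ChatGPT proposed in detail, just one possible reading of "limited turns, reset, be more efficient".

```python
import random

# Hypothetical turn costs per room type (illustrative values only).
TURN_COST = {"empty": 1, "monster": 3, "trap": 2, "puzzle": 4}


def generate_temple(seed, length=10):
    """Procedurally generate a linear temple as a list of room types."""
    rng = random.Random(seed)
    return [rng.choice(list(TURN_COST)) for _ in range(length)]


def run_loop(temple, turn_budget, known):
    """Play one loop. Rooms met in an earlier loop cost a single turn.

    Returns True if the exit was reached before the turns ran out.
    `known` is the player's memory and survives the reset.
    """
    turns = turn_budget
    for index, room in enumerate(temple):
        cost = 1 if index in known else TURN_COST[room]
        known.add(index)  # the player learns the room even if they fail in it
        if cost > turns:
            return False  # out of turns: back to the start, temple resets
        turns -= cost
    return True


def loops_needed(temple, turn_budget, max_loops=50):
    """Count how many loops it takes to get through, or None if never."""
    known = set()
    for loop in range(1, max_loops + 1):
        if run_loop(temple, turn_budget, known):
            return loop
    return None


if __name__ == "__main__":
    temple = generate_temple(seed=0)
    print(temple, loops_needed(temple, turn_budget=12))
```

The only state that carries over between loops is `known`, which is the whole point of the mechanic: the temple is identical every time, the player is not.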

-

The issue with this argument is that the process of training the neural network is not in principle any different than a human consultant learning from publicly available code and then giving out advice for money. The only obvious difference being that GPT is dramatically more efficient, dramatically more expensive to train and cheaper to use. This difference may be enough to say that LLMs should be somehow regulated, but I don't see how it could be enough to say that one is OK and the other is completely unethical and disgusting. Isn't the issue with LLMs that they don't give credit to the material that they were trained on? How is then any reputation tarnished? Or do you mean tarnishing somebody else's reputation by generating libelous articles etc.? That may be a problem, but I don't see the relation to the fact that training data is public. As far as I know there is some legal precedent saying that training on public texts is legal in the US. It might change in the future because LLMs probably change the game a bit, but I don't believe there's any legal reason why they should receive any bills at this moment. They also published some things about training GPT-3 (the majority is Common Crawl). Personally I don't see an issue with including controversial content in the training dataset and while "jailbreaks" (ways to get it to talk about controversial topics) are currently a regular and inevitable thing with ChatGPT, outside of them it definitely has an overall "western liberal" bias, the opposite of the websites you mention.

-

I agree with what you're saying. My biggest problem with this ethics debate is that there seems to be a lot of insincerity and moving the goalposts by people whose argument is simply "I don't like this" hidden behind various rationalizations. Like people claiming that Stable Diffusion is a collage machine or something comparable to photobashing. Or admitting that it's not the case but claiming that it can still reproduce images that were in its training dataset (therefore violating copyright), ignoring that the one study that showed this effect was done on an old unreleased version of Stable Diffusion which suffered from overtraining because certain images were present in 100+ copies in its dataset, and even in this special situation it took about 1.7 million attempts to create one duplicate, never reproducing it on any of the versions released for public use. I also dislike how they're attacking Stable Diffusion the most - the one tool that's actually free for everyone to use and that effectively democratizes the technology. Luddites at least did not protest against the machines themselves, but against not having the ownership of the machines and the right to use them for their own gain. They're just picking an easy target. I don't believe there's any current legal reason to restrict training on public data. But there are undoubtedly going to be legal battles because some people believe that the process of training a neural network is sufficiently different from an artist learning to imitate an existing style that it warrants new legal frameworks to be created. I can see their point to some degree. While the learning process in principle is kind of similar to how a real person learns, the efficiency at which it works is so different that it will undoubtedly create significant changes in society, and significant changes in society might warrant new legislation even if it seems unfair. The issue is I don't see a way to do such legislation that could be realistically implemented. Accepting reality, moving forward and trying to deal with the individual consequences seems like the least bad solution at this moment.

-

Seems like most threads about this topic on the internet get filled by similar themes. ChatGPT is not AI. ChatGPT lied to me. ChatGPT/Stable Diffusion is just taking pieces of other people's work and mashing them together. ChatGPT/Stable Diffusion is trained against our consent and that's unethical. The last point is kind of valid but too deep for me to want to go into (personally I don't care if somebody uses my text/photos/renders for training), the rest seem like a real waste of time. AI has always been a label for a whole field that spans from simple decision trees through natural language processing and machine learning to an actual hypothetical artificial general intelligence. It doesn't really matter that GPT at its core is just a huge probability based text generator when many of its interesting qualities that people are talking about are emergent and largely unexpected. The interesting things start when you spend some time learning how to use it effectively and finding out what it's good at instead of trying to use it like a Google or Wikipedia substitute or even trying to "gotcha!" it by having it make up facts. It is bad at that job because neither it nor you can recognize whether it's recalling things or hallucinating nonsense (without spending some effort).

I have found that it is remarkably good at:

- Coding. Especially GPT-4 is magnificent. It can only handle relatively simple and short code snippets, not whole programs, but for example when starting to work with a library I've never used before it can generate something comparable to tutorial example code, except finetuned for my exact use case. It can also work a little bit like pair programming. Saves a lot of time.
- Text/information processing. I needed to write an article that dives relatively deep into a domain that I knew almost nothing about. After spending a few days reading books and articles and other sources and building a note base, instead of rewriting and restructuring the note base into text I generated the article paragraph by paragraph by pasting the notes bit by bit into ChatGPT. Had to do a lot of manual tweaking, but it saved me about 25% of time over the whole article, and that was GPT-3.5. GPT-4 can do much better: my friend had a page or two full of notes on a psychiatric diagnosis and found a long article about the same topic that he didn't have time to read. So he just pasted both into ChatGPT and asked whether the article contains information that's not present in his notes. ChatGPT answered basically "There's not much new information present, but you may focus on these topics if you want, that's where the article goes a bit deeper than your notes." Naturally he went to actually read the whole article and check the validity of the result, and it was 100% true.
- General advice on things that you have to fact check anyway. When I was writing the article mentioned above, I told it to give me an outline. Turns out I forgot to mention one pretty interesting point that ChatGPT thought of, and the rest were basically things that I was already planning to write about. Want to start a startup but know nothing about marketing or other related topics? ChatGPT will probably give you very reasonable advice about where to start and what to learn about, and since you have to really think about that advice in the context of your startup anyway, you don't lose any time by fact checking.

Bing AI is just Bing search + GPT-4 set up in a specific way. It's better at getting facts because it searches for those facts on the internet instead of attempting to recall them. It's pretty bad at getting truly complicated search queries because it's limited by using a normal search in the background, but it can do really well at specific single searches. For example I was looking for a supplement that's supposed to help with chronic fatigue syndrome and I only knew that it contained a mixture of amino acids, it was based on some published study and it was made in Australia. Finding it on Google through those things was surprisingly difficult. I'm sure I could do it eventually, but it would certainly take me longer than 10 minutes. Bing AI search had it immediately.

-

Man, that was WAY bigger than I expected it to be. Huge, even. Overall I liked it and it had lots of character, which I appreciate more than perfect polish. It's great when authors try something new. I liked the pagans. The pagans are great. I would like to see more pagans. The "parallel worlds" created by pagan shortcuts vs the rest of the city were great as well. Story was really nice. The keys were a solid idea. I wouldn't want all (or most) missions to be like that, but once in a while, why not, it provides a different experience, which is good. The only issue I have with it is that I think you may have underestimated how trivial it is to overlook a key even if it's not hidden in any way, and I think people have biases about where and when to seek keys from other missions. I think that from past experience (especially in various city missions) I'm not used to looking for relatively crucial items in areas that are not necessary for finishing a quest objective. Don't know if this is really your fault, but I'd say I'm not the only one this happened to. For example I missed the gate key completely because I simply forgot to look around in that particular small piece of the mission, I just discovered a different crucial key (that let me leave the area) pretty much right next to it and I didn't see any mission context that would suggest "this is the area where the map finally opens up and you should find the gate key". The key is not necessary to finish, but not having it makes the final part a lot less fun. I liked that some things that happened were pretty unique. Raiding the chief builder's quarters by just running through everything was an absolute carnage and funny as hell: Now some criticism: First one is really superficial, but I didn't look at your mission for a while because you chose one of the most generic names possible and I think I subconsciously assumed the mission itself is not going to be a great effort either (in hindsight: LOL!). 
If I had to say one single thing I actually dislike about the mission, it's the name. Some details here and there, like a door in the Ox 2nd floor (I think? across the street from the hypos) completely missing and only showing a wall and a levitating doorknob in the doorframe, some levitating vegetable garden on the farm or misaligned branches here and there, nothing important. It was also possible to look out of bounds in several places, but the only one where I think it was an issue was in the square where the pub is, next to the Builder's building - there's a scaffolding that you climb on, you can look through a semi-open window and there's a ledge nearby that you can climb on. For me the place screamed "climb onto this and see where it goes", only to show me an out of bounds view and a z-fighting texture. It was a tad too long and the pacing around the end was not great. Length itself would not be an issue I think, but after finishing the main mission it took me another hour to complete the medium loot objective. I approached the grand finale, fulfilled the main objective, and instead of victoriously arriving with the cure I had to roam around for an hour looking for places that I missed. And I did miss some, obviously, but that's simply often going to happen with a mission like this. That was more tiring than pleasant. The biggest problem for me: Lighting. Seems like there was light shining through walls everywhere. Light gem was shining in a few places that seemed dark. Places that should have been dark (no windows or other light sources) weren't and instead seemingly contained invisible light sources that didn't make sense (the room on the ground floor of the bleak house was like this I believe). I noticed that some light fixtures continued to emit a little bit of light even after being extinguished, which is not an issue, I'm talking about places where I could not find such rationale for the light. 
I'm surprised that nobody complained about this yet, almost makes me wonder if there's something wrong with my installation, so I took a few screenshots to show what I mean: https://imgur.com/a/JuO9sF5 If this is really how it's supposed to be, then this is the one thing I'd personally focus on improving in your next mission, which I'm sure is going to be great.

-

On one hand this is probably true, but it's a different situation because in the 90s or early 00s they'd be revolutionary whereas today they're merely iterative. Doesn't mean they're bad, but I think it's reasonable to be disappointed that there's way less innovation in some segments (clarifying this because I know it's not everywhere) of the market now, with the exception of aspects like graphics which are rarely used to innovate the actual gameplay. I also think that despite the "if they were released in the 90s" claim probably being true, nothing as good as Deus Ex with its essence of 90s zeitgeist or Thief with its sprawling nonlinear levels and a unique and atmospheric world has been released. I'm usually not complaining because I don't want to spend that much time playing videogames anyway, so my backlog of good and interesting games will always be big enough. But I do find it disappointing how few non-indie companies there are brave enough to try new things, and how often companies that try to iterate on unique and interesting games instead make them simpler and less unique.

-

Fan Mission: In Plain Sight by Frost_Salamander (2022/08/07)

vozka replied to Frost_Salamander's topic in Fan Missions

That was way bigger than I initially expected! Pretty tough too, got stuck a couple times and had to look through this thread. Took me 3 hours to complete and in the end I got 8400 loot, but that was after consulting the hints and finding 3/5 secrets. One thing that frustrated me was that it was sometimes unclear where I'm supposed to be able to jump and where not. The openable window that's opposite the post office (I'm not specific in order to not spoil things, hopefully it's clear) seems like it should be reachable if you climb on the pub sign and lamp on the other side of the street, jump over and grab or jump onto the piece of the wooden beam that's sticking out, but it's not and the beam cannot be stood on or grabbed. Similar situation with the courtyard in front of Lord Eton's house - when you first enter through the bottom window, it seems like you could grab the bottom of the top balcony on the left, but you cannot. On the other hand some of the intended jumps had quite narrow outside window sills, so falling down or not jumping far enough was very easy and frustrating. It felt like the space where you can stand up outside of a window, press forward and make a jump was rather tiny. And another problem was that when there were window sills, they were often slightly overhanging, which was blocking me from mantling on them when crouching (I would bump into the overhang instead of mantling on top of it), without making noise. It was rather fiddly. Apart from these issues which affected the smoothness of movement I had a good time. Interesting mission and not simple either.

-

Continuing this thread, which I started here.

Issue (so you don't have to read it all): In some cases guards walking near you or even bumping into you notice you and make a "huh?" sound, but then they continue walking for a bit until they suddenly turn around and run towards you fully aware. The part that I have a problem with is not the delay itself but that they keep walking during it. I think it's unrealistic (even in the context of a game like this) and confusing - it doesn't send the message of what's happening clearly. It was proposed that I show an example of what I mean, which took me merely 10 months, and I bring not one but two whole examples. In both cases the guards physically bump into me. I think this also happens if the guard notices the player from up close because the player is just slightly lit, without the guard physically bumping into the player, but I have not encountered it in the last couple missions I played, so no proof/example there.

Proposed solution (so I don't come empty handed): After saying "huh?" the guard stops and looks at the player, or maybe better, starts looking around in the general direction of the player. Since this seems to be triggered by bumping into the player, making one step back after stopping and turning to face the player would also seem realistic to me (at least that's what I'd do after bumping into something in the dark).

I gotta say without sound those videos are kind of funny. I imagine the guard walking behind the corner and suddenly realizing "CRAP! WAS THAT A BLOODY THIEF??"
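The proposed behaviour can be sketched as a tiny state machine. To be clear, this is not how TDM's AI is actually implemented (I haven't looked at that code); the class, the state names, the one-dimensional "position" and the `reaction_delay` parameter are all made up just to show the idea that the delay stays but the walking stops.

```python
from enum import Enum, auto


class GuardState(Enum):
    PATROLLING = auto()
    INVESTIGATING = auto()  # stopped, looking toward whatever was bumped
    ALERTED = auto()        # fully aware of the player


class Guard:
    """Toy guard walking along a line; all names here are hypothetical."""

    def __init__(self, reaction_delay=3):
        self.state = GuardState.PATROLLING
        self.position = 0
        self.facing = 1  # +1 or -1 along the patrol line
        self.reaction_delay = reaction_delay
        self._ticks_left = 0
        self.barks = []

    def bump(self, player_direction):
        """Called when the guard physically bumps into the player."""
        if self.state is not GuardState.PATROLLING:
            return
        self.barks.append("huh?")
        self.position -= self.facing   # one step back from the obstacle
        self.facing = player_direction  # turn to face the player
        self.state = GuardState.INVESTIGATING
        self._ticks_left = self.reaction_delay

    def tick(self):
        """Advance one game tick."""
        if self.state is GuardState.PATROLLING:
            self.position += self.facing  # keep walking the route
        elif self.state is GuardState.INVESTIGATING:
            # The delay is kept, but the guard stands still during it.
            self._ticks_left -= 1
            if self._ticks_left <= 0:
                self.state = GuardState.ALERTED
                self.barks.append("alert")


if __name__ == "__main__":
    guard = Guard(reaction_delay=2)
    guard.tick()
    guard.bump(player_direction=1)
    guard.tick()
    guard.tick()
    print(guard.state, guard.position, guard.barks)
```

The current behaviour would correspond to the guard still running the `PATROLLING` branch during the delay, which is exactly the part that reads as confusing.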

-

Nice site for search an download AI created textures

vozka replied to Zerg Rush's topic in Art Assets

It also contains assets from textures.com, which is in violation of their license, and some wiki page afaik still encourages their usage. That of course doesn't excuse doing something similar again, but if directly violating a license of an asset that should be traceable back to its source didn't cause any issues till now, the chance that using assets which at this moment are completely legal and very difficult to trace would cause any legal issues is minuscule.

-

Nice site for search an download AI created textures

vozka replied to Zerg Rush's topic in Art Assets

I wouldn't worry about copyright, there's some precedent for training text AIs and I assume that any successful lawsuits will be more about deepfakes, generating images that imitate copyrighted works of living artists etc. Certainly nobody is going to care about textures in a free game, especially if they're not specifically labeled as "LOOK, WE MADE THIS WITH AI!". I understand that the question of caring or not caring about it may be more theoretical/ideological than practical, but I'd say there are ideological reasons to not care about these things as well, so I don't. I think that the popular Stable Diffusion GUIs can generate seamless images and therefore be usable for this (the most popular one by Automatic1111 can), and there may be some pretrained models specifically for game textures as well. I don't know what's the status of Radeon support, but those with NVIDIA cards can probably run it locally, after optimizations it needs less than 4 GB VRAM to run. I run it on a 6 GB GTX 1060 without issues. And it's a ton of fun, although getting useful textures out of it won't be easy.

-

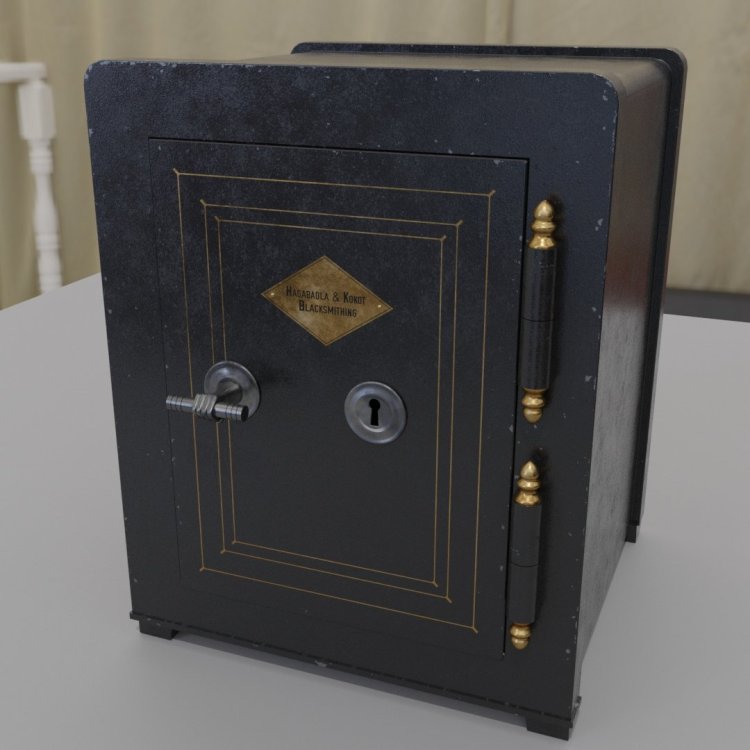

Added some chipped paint to the painted version. I'm the type of person who hates doing things by hand so I spent about 5x more time learning how to do it procedurally (surprisingly there are a few catches), but I'm pretty happy with it and I think that makes this one worn enough.

-

If one out of ten people notice, that's good enough for me, the only issue is if I'll be able to make it low-poly enough to not strain the engine. I tried making it low-poly and bake normals in a quick & dirty way, so there are errors and I'll have to try a couple times, but so far I got the whole handle down to 170 tris. Seems like with a shadow mesh, which I guess could be half that, it would be good enough.

-

Grunge and distressing is definitely something I have some trouble with, but you're right. Regarding the inlay, good point - I didn't really do that because this is still PBR with metalness and everything and the materials will have to be done slightly differently for TDM anyway. While this is flattering, it's not that difficult to get a semi-photorealistic render in a path-tracing renderer using physically based materials and models with a virtually unlimited number of polygons. Adapting that into the game is another matter. I created two variants. First is a black paint (again, no distressing yet) that imo looks a bit better, but if I recall correctly it's possible to have models with more than one set of materials, so it would be best to have both. Second is a new handle which apparently was semi-common in Victorian times and I thought it was pretty funny. No idea how it will look after low-poly retopo and baking though.

-

Awesome, that would be great. Regarding the separate models, that's what I assumed, so that's how I did it. I'll work on it a bit more first.

-

Well if this is deemed good enough, I'm probably going to need somebody to help with the interactivity anyway - door opening and the lock/moving the handle. I'm not a map maker and I'm not sure I'll have the time to learn that (it's harder to justify than practicing modelling, which I need for other things too). Providing a version without the keyhole would not be a problem for me of course. I have an alternative material and handle in mind as well, it would be nice to have more than one option.

-

Attempting to make a model of a safe for fun and practice. So far it's just hi-poly with PBR materials. My modelling skills are quite narrow and this is outside their scope, so I'm looking for criticism, mostly:

- Is the overall style acceptable, regarding apparent time period etc? (apart from the text in the logo, that's a placeholder and I imagine I'd use some decorative Victorian font plus some actual believable names)
- Would the quality be good enough like this?
- Do you have suggestions about how to improve it?

-

An article about using Stable Diffusion for image compression - storing the data necessary for the neural network to recreate the image in a way that would be more efficient than using standard image compression algorithms like JPEG or WebP: https://matthias-buehlmann.medium.com/stable-diffusion-based-image-compresssion-6f1f0a399202 It's just a proof of concept since the quality of the model is not there yet (and the speed would be impractical), it can't do images with several faces very well for example. But it's very interesting because it has completely different tradeoffs than normal lossy compression algorithms - it's not blocky, it doesn't produce blur or color bleed, in fact quite often it actually keeps the overall character of the original image almost perfectly, down to grain levels of a photograph. But it changes the content of the image, which standard compression does not do.
