Arcturus last won the day on August 30

-

The priest model's skinning was horribly mangled, so I fixed it in the repository and on Sketchfab. It's still bad, but at least not fubar. The collar should be stiffer now and I glued the stole to the legs. It doesn't look great in idle animations, but it's better when walking.

Tagged with: pbr, physically based rendering (and 3 more)

-

I fixed some issues with the builder priest model and made some small changes to the rig.

-

If someone's interested, here's the .blend file with textures packed. I put short comments in the nodes that describe what they're doing. In 'render' or 'material preview' mode, you can preview each node on the 3D model by connecting it to the material output. Ctrl+Shift+LeftMouse should do that automatically in newer versions of the program.

At first I thought I would use Substance Painter, as it's the most popular app for creating texture maps. I have an old version I bought on Steam, but I have some problems running it. For this purpose a painting app isn't really required; in principle you could prepare all the textures in an image editor. I made the masks I needed in Gimp, and one mask for the boot in Blender, because it was more convenient. However, for complex models it is useful to have a render preview.

In vanilla Blender, exporting texture maps is a bit cumbersome. There's a "TexTools" plugin that makes this a bit faster. There are also paid plugins that try to emulate the Substance Painter interface.

You can see that some materials have additional stages: clear coat, sheen, and subsurface scattering for skin. I didn't make any special textures for those. I had to invert the green channel of the normalmaps, as Blender uses a different convention from Darkmod. All maps except the "color" maps use the "Non-color" color space, which is worth remembering if you want to work in Blender.

I used compressed .dds textures. There used to be a separate repository for the original uncompressed Darkmod textures. Some may even have original sources with layers? That would help with editing them.

I think that ordinary environment textures like wooden floors or stone walls wouldn't be a problem when transitioning to a PBR model. More problematic are textures with multiple different surfaces, like wooden doors that have metal hinges, because they require manual masking. It took me a moment to realize that the boots are made of a leather bottom and a metal top.

There's a certain level of artistic interpretation. What material is the robe exactly? Is it very rough, or a little shiny? How shiny should the metal parts be? And so on.
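The green channel inversion mentioned above can also be done outside Blender. Here's a minimal sketch in plain Python, assuming 8-bit RGB pixels stored as tuples. The function names are hypothetical and not part of any tool from the post, and reading or writing the actual image files is left out.

```python
def flip_green(pixel):
    """Invert the green channel of one 8-bit RGB pixel.

    This converts a normal map between the two common Y conventions
    (green pointing "up" versus green pointing "down").
    """
    r, g, b = pixel
    return (r, 255 - g, b)


def flip_green_channel(pixels):
    """Apply the inversion to a flat list of RGB pixels."""
    return [flip_green(p) for p in pixels]


# Example: a typical "flat" normal map pixel is left almost unchanged,
# while a strongly tilted one has its green value mirrored.
flat = flip_green((128, 128, 255))
tilted = flip_green((128, 200, 255))
```

Applying the function twice gives back the original pixel, so the same code works for conversion in either direction.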

-

I tried this once years ago with a guard NPC. I noticed there have been ongoing discussions about PBR in Darkmod, so I put the builder priest on Sketchfab: https://skfb.ly/pqrrC I enabled the model inspector, so you can take a look at all the texture maps. I haven't remade anything from scratch; mostly I brightened the metal parts of the diffusemaps and made metalness and roughness maps. And here it is rendered in Cycles.

-

I added a .blend file with a female character. I made some changes to both - I removed some drivers and added some new constraints to bones.

-

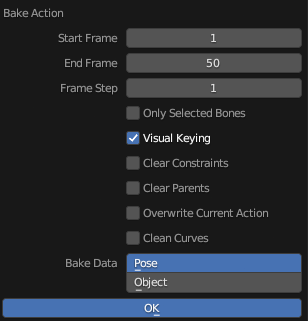

I tested this addon for importing and exporting md5.mesh and md5.anim in Blender: https://github.com/KozGit/Blender-2.8-MD5-import-export-addon

It works with Blender 3.6.15, which is the latest version in the 3.x series. It doesn't work with Blender 4.2. It seems that the culprit is the change in the 4.0 series from bone layers to bone collections.

Here's an updated .blend file with male NPC animations.

.blend file with a female model

Like before:

- use armature_control to animate the model
- when you're done, select the tdm_ai_proguard armature object and Bake Action to Pose; select Visual Keying
- for the exporter to work you need to select both the tdm_ai_proguard and a mesh object that's being animated, e.g. proguard_armor
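The version split described above can be expressed as a simple check. This is only a sketch: the helper name is hypothetical, and it assumes the incompatibility really does start at 4.0, which is what the post suspects rather than something confirmed. Inside Blender the version tuple would come from `bpy.app.version`; here it is passed in so the function can run anywhere.

```python
def uses_bone_layers(blender_version):
    """Return True for Blender versions that still have bone layers.

    Bone layers were replaced by bone collections in the 4.0 series,
    so anything below (4, 0, 0) is treated as layer-based.
    """
    return tuple(blender_version) < (4, 0, 0)


# Hypothetical usage: decide up front whether the addon is worth trying.
for version in [(3, 6, 15), (4, 2, 0)]:
    status = "should work" if uses_bone_layers(version) else "expect errors"
    print(version, status)
```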

-

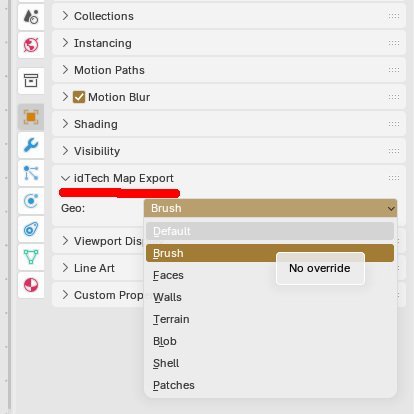

One more thing I noticed is that there is a tab in Object Properties where the user can specify the type of geometry for each object individually. This setting overrides the global export setting. This way you can export multiple objects at once, each as a different type.

-

Deform expand doesn't work with diffusemaps, unless you want to use shadeless materials. Here's an animated bumpmap, with heat haze to better sell the effect:

    table customscaleTable { { 0.7, 1 } }

    deform_test_01
    {
        nonsolid
        {
            blend specularmap
            map _white
            rgb 0.1
        }
        diffusemap models\darkmod\props\textures\banner_greenman
        {
            blend bumpmap
            map textures\darkmod\alpha_test_normalmap
            translate time * -1.2 , time * -0.6
            rotate customscaleTable[time * 0.02]
            scale customscaleTable[time * 0.1] , customscaleTable[time * 0.1]
        }
    }

    textures/sfx/banner_haze
    {
        nonsolid
        translucent
        {
            vertexProgram heatHazeWithMaskAndDepth.vfp
            vertexParm 0 time * -1.2 , time * -0.6 // texture scrolling
            vertexParm 1 2 // magnitude of the distortion
            fragmentProgram heatHazeWithMaskAndDepth.vfp
            fragmentMap 0 _currentRender
            fragmentMap 1 textures\darkmod\alpha_test_normalmap.tga // the normal map for distortion
            fragmentMap 2 textures/sfx/vp1_alpha.tga // the distortion blend map
            fragmentMap 3 _currentDepth
        }
    }

At the moment, using md3 animation is probably the best option for animated banners:
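To see what values `customscaleTable[time * 0.1]` produces, here's a rough Python sketch of a table lookup. It assumes the usual idTech 4 behaviour for a table without `clamp` or `snap`: the index wraps every 1.0 and values are linearly interpolated, with the last entry blending back into the first. That behaviour is my assumption and isn't stated in the post; `table_lookup` is a hypothetical name.

```python
import math


def table_lookup(values, index):
    """Approximate a wrapping, interpolated material table lookup.

    values: the numbers listed in the table declaration
    index:  the expression inside the brackets, e.g. time * 0.1
    """
    n = len(values)
    scaled = (index % 1.0) * n          # wrap the index into one table period
    base = math.floor(scaled)
    frac = scaled - base                # position between two neighbouring entries
    i = int(base) % n
    return values[i] * (1.0 - frac) + values[(i + 1) % n] * frac


custom_scale = [0.7, 1.0]

# With scale customscaleTable[time * 0.1] one full cycle takes 10 seconds:
# the scale rises from 0.7 to 1.0 over 5 seconds, then falls back to 0.7.
for t in (0.0, 2.5, 5.0, 7.5):
    print(t, table_lookup(custom_scale, t * 0.1))
```

Under these assumptions the bumpmap never scales below 0.7 or above 1.0, so the pattern gently "breathes" instead of snapping between sizes.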

-

It seems you can't mix two deform functions. You can mix a deform with some transformation of the map (scale, rotate, shear, translate):

    deform_test_01
    {
        nonsolid
        deform expand 4*sintable[time*0.5]
        {
            map models/darkmod/nature/alpha_test
            translate time * 0.1 , time * 0.2
            alphatest 0.4
        }
    }

    deform_test_01
    {
        nonsolid
        deform expand 4*sintable[time*0.5]
        {
            map models/darkmod/nature/alpha_test
            translate time * 0.1 , time * 0.2
            alphatest 0.4
            vertexcolor
        }
        {
            blend add
            map models/darkmod/nature/alpha_test
            rotate time * -0.1
            alphatest 0.4
            inversevertexcolor
        }
    }

-

Here's one using deform move:

    deform_test_01
    {
        description "foliage"
        nonsolid
        {
            blend specularmap
            map _white
            rgb 0.1
        }
        deform move 10*sintable[time*0.5] // *sound
        {
            map models/darkmod/nature/grass_04
            alphatest 0.4
        }
    }

Here's one using deform expand:

    deform_test_01
    {
        description "foliage"
        nonsolid
        {
            blend specularmap
            map _white
            rgb 0.1
        }
        deform expand 4*sintable[time*0.5]
        {
            map models/darkmod/nature/grass_04
            alphatest 0.4
        }
    }

Neither works well with diffusemaps. These actually move vertices, but all of them at once.
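The "all at once" behaviour follows from the expression itself: `4*sintable[time*0.5]` depends only on time, not on vertex position, so every vertex gets the same offset in a given frame. A small Python sketch of that value over time, assuming the sine table covers one full sine period per 1.0 of index (approximated here with `math.sin`; the engine uses a sampled table). The function names are hypothetical.

```python
import math


def sin_table(index):
    """Approximate the sine table: one full sine wave per 1.0 of index."""
    return math.sin(2.0 * math.pi * (index % 1.0))


def expand_amount(time_s, amplitude=4.0, rate=0.5):
    """Value of amplitude * sintable[time * rate] at a given time in seconds."""
    return amplitude * sin_table(time_s * rate)


# With rate 0.5 the cycle repeats every 2 seconds and the offset
# swings between -4 and +4 units for the whole surface together.
for t in (0.0, 0.5, 1.0, 1.5):
    print(t, round(expand_amount(t), 3))
```

Getting per-vertex variation would need something that takes position into account, which is why the wave reads as the whole patch pulsing rather than as wind passing through it.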

-

That demo used MD5 skeletal animation. That's not the best solution for large fields of grass. Funny, I actually just made this:

It's a combination of func_pendulum rotation and a deform turbulent shader applied to some patches. With func_pendulum, speed and phase can vary for more randomness. Here's the material:

    deform_test_01
    {
        surftype15
        description "foliage"
        nonsolid
        {
            blend specularmap
            map _white
            rgb 0.1
        }
        deform turbulent sinTable 0.007 (time * 0.3) 10
        {
            blend diffusemap
            map models/darkmod/nature/grass_04
            alphatest 0.4
        }
    }

It doesn't really deform vertices, but rather the texture coordinates. One small problem is that when applied to diffusemaps, only the mask gets animated and the color of the texture stays in place:

Whereas if you use any of the shadeless modes, both the color and the mask get distorted, as you would expect:

-

Noclip has a separate channel where they put old press materials.

-

That's the idea. BSP should be simplified when compared to the visible geometry. But if it's too simple, then the visible geometry has to conform. I always looked for a way of building less boxy, angular maps and more organic ones. Keep in mind that those are experiments. I'm looking for the limits of the engine; I'm not saying that the whole map should be this complex. I also think that with this script, building in Blender is a viable option. Back in the Blender 2.7 days I remember I had to use two scripts: one for exporting to the Quake .map format and one for converting to the Doom 3 .map format. And the geometry would drift apart. It was a pain in the ass. This is better.